Smart machines, or artificial intelligence (AI), are tools that help computers think and learn like people. They make life easier by suggesting movies, driving cars, or even chatting with us. But how did this start, and why does it matter? Let’s explore in simple words.

Key Points:

- AI began as a dream in the 1950s, inspired by old stories of robots, and has grown into helpful everyday tools.

- Alan Turing is seen as the early father of AI for asking if machines could think, while modern experts like Geoffrey Hinton built on that.

- There are a few main types: some just react to the moment, others learn from the past, and future ones might understand feelings.

- In 2026, AI systems are getting better at working together in teams and at powering robots, but they’re not perfect yet.

- AI’s growth mirrors computers: both started big and clunky, then became small and everywhere, changing how we work and live.

- Research suggests AI could be part of the next big world change, like past shifts from steam power to computers, but some debate whether it’s overhyped.

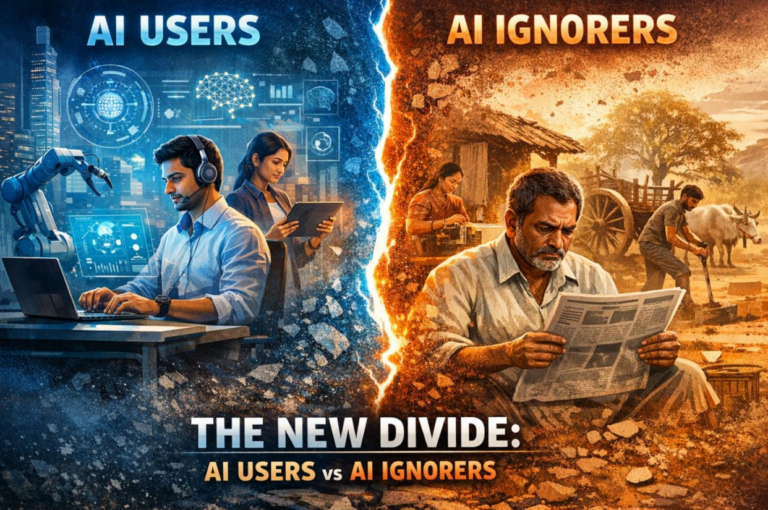

- Learning AI basics helps everyone stay ahead in jobs and make smart choices, as it touches daily life without replacing human creativity.

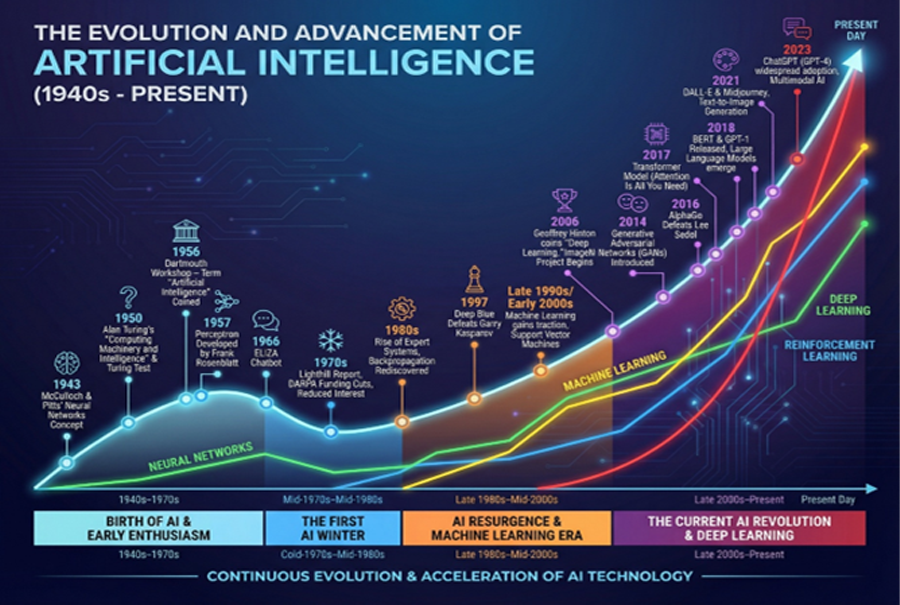

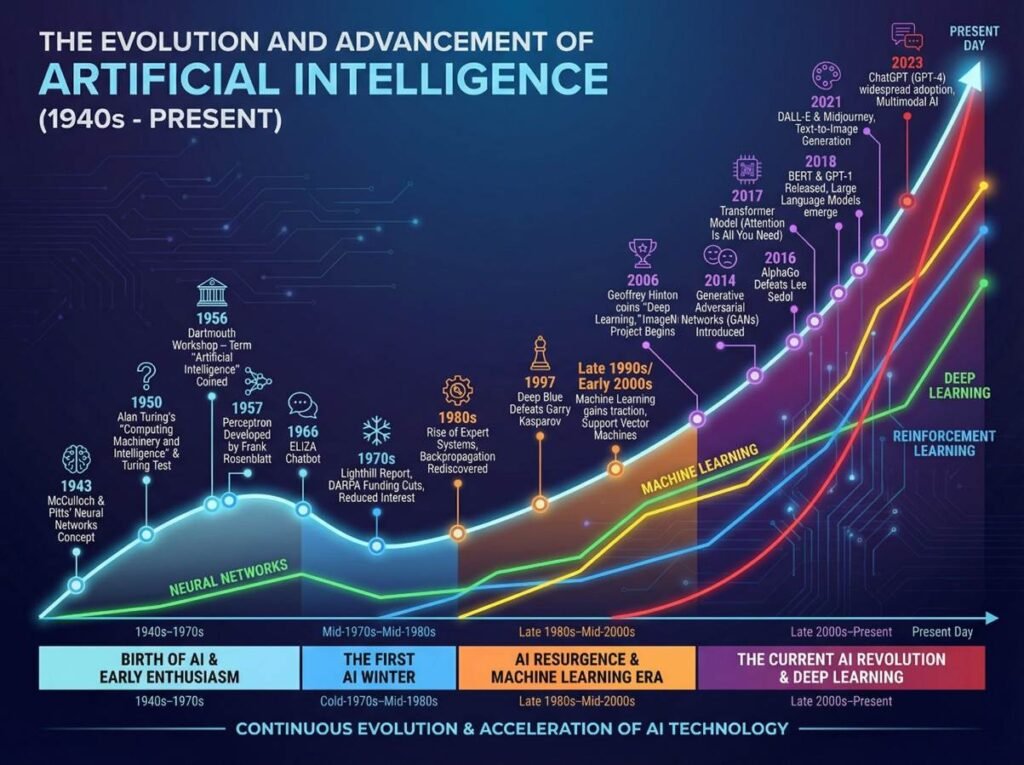

How AI Began: The story of AI starts with myths about living statues. In real life, it kicked off in 1950 when Alan Turing wondered if machines could think. He made a game to test it. By 1956, experts gathered to plan AI’s future. There were tough times when progress slowed, but better tech in the 2010s made AI boom.

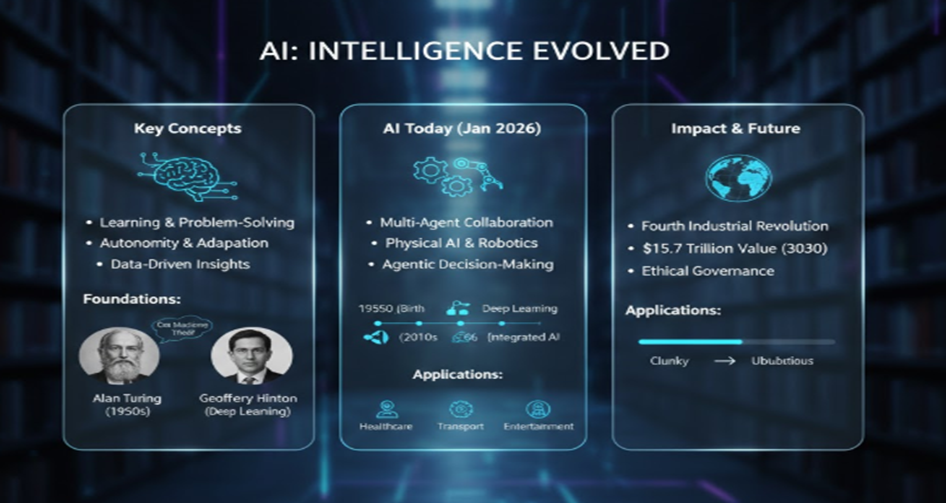

The Fathers of AI: Turing laid the groundwork with his ideas on thinking machines. Today, Geoffrey Hinton is called a modern father for teaching computers to learn from pictures and words.

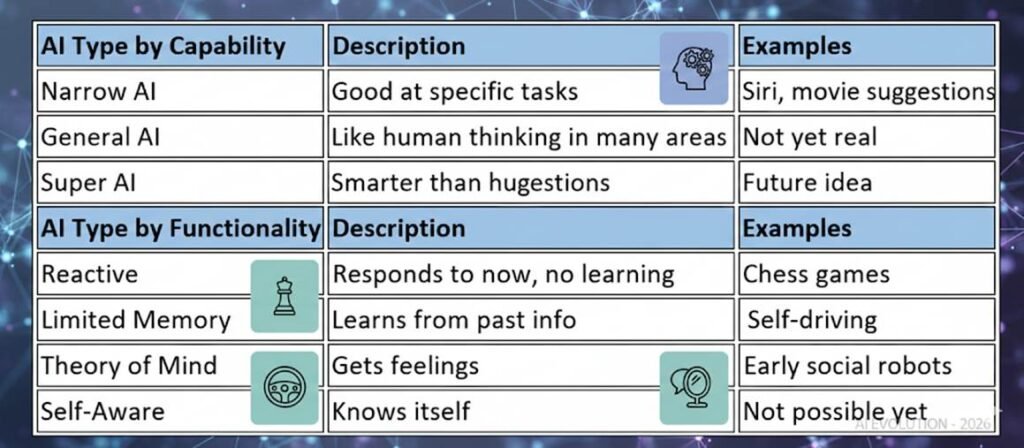

Different Kinds of AI: AI comes in types based on what it does. Reactive ones handle only what is in front of them right now, like playing games. Memory ones learn from what happened before, like self-driving cars. Others might one day understand feelings or even be aware of themselves. Most today focus on single tasks.

The modern field emerged in the mid-20th century. In 1950, British mathematician Alan Turing published a seminal paper asking, “Can machines think?” He proposed the Imitation Game, later known as the Turing Test, to evaluate if a machine could exhibit human-like intelligence. This foundational question ignited interest. The official birth of AI as a discipline occurred in 1956 at the Dartmouth Conference, organized by John McCarthy, Marvin Minsky, and others, who coined the term “artificial intelligence” and envisioned machines simulating human reasoning. Early milestones included Arthur Samuel’s checkers program, begun in 1952, which improved through self-play and introduced machine learning concepts. The 1960s saw chatbots like ELIZA, simulating conversations. Yet the field endured “AI winters” in the mid-1970s and again from the late 1980s into the early 1990s, periods of reduced funding due to unmet expectations and computational limitations. Between them, the 1980s brought a boom in expert systems, rule-based programs mimicking specialists, which built on earlier research systems like MYCIN for medical diagnoses. The real surge came in the 2010s with deep learning, powered by vast data and advanced hardware, enabling breakthroughs like image recognition in 2012.

Alan Turing is widely regarded as the foundational “godfather” of AI for his theoretical contributions to computation and intelligence. His work during World War II on code-breaking machines, like the Bombe used to break Enigma-encrypted messages, demonstrated practical applications of computational thinking. In contemporary terms, Geoffrey Hinton, who shared the 2024 Nobel Prize in Physics, is often dubbed a “godfather” for pioneering deep learning techniques that underpin modern AI systems. Hinton’s warnings about AI’s risks, including potential existential threats, highlight the ethical dimensions of the field.

AI is classified in two primary ways: by capability and by functionality. By capability: Narrow AI (task-specific, like voice assistants), General AI (human-level versatility, theoretical), and Super AI (surpassing humans, hypothetical). By functionality: Reactive (no memory, e.g., chess programs), Limited Memory (learns from data, e.g., autonomous vehicles), Theory of Mind (understands emotions, emerging), and Self-Aware (conscious, speculative). Current applications predominantly use narrow, limited-memory AI for recommendations, automation, and predictions.
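To make the difference between the first two functional types concrete, here is a minimal Python sketch using a rock-paper-scissors game. It is only an illustration under simple assumptions: the names `reactive_player`, `LimitedMemoryPlayer`, and `BEATS` are made up for this example, and real systems such as chess programs or autonomous vehicles are far more complex.

```python
from collections import Counter, deque

# Maps each move to the move that beats it (hypothetical helper for this example).
BEATS = {"rock": "paper", "paper": "scissors", "scissors": "rock"}


def reactive_player(opponent_current_move: str) -> str:
    """Reactive: responds to the current input only and stores nothing."""
    return BEATS[opponent_current_move]


class LimitedMemoryPlayer:
    """Limited memory: remembers a short window of past moves and uses it to decide."""

    def __init__(self, window: int = 5) -> None:
        # Only the most recent `window` moves are kept; older ones are forgotten.
        self.history = deque(maxlen=window)

    def observe(self, opponent_move: str) -> None:
        """Record one move the opponent has played."""
        self.history.append(opponent_move)

    def play(self) -> str:
        """Counter the move the opponent has played most often recently."""
        if not self.history:
            return "rock"  # arbitrary default before any data has been seen
        most_common_move, _ = Counter(self.history).most_common(1)[0]
        return BEATS[most_common_move]
```

The reactive function gives the same answer for the same input every time, while the limited-memory player changes its answer as it observes more moves. That change in behavior based on past data is the key distinction between the two types.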

In 2026, AI trends include multiagent systems where AI entities collaborate on complex tasks, physical AI integrating with robotics for real-world applications, and agentic AI for autonomous decision-making. Advances like Apple’s reimagined Siri and quantum-AI fusions promise efficiency, but challenges like scaling and energy demands persist.

AI’s evolution parallels the computer revolution: both began as specialized, cumbersome tools (e.g., 1940s room-sized computers like ENIAC) and became ubiquitous, democratizing access: computers to information, AI to intelligence. This shift has driven economic growth but also job transformations, requiring adaptation.

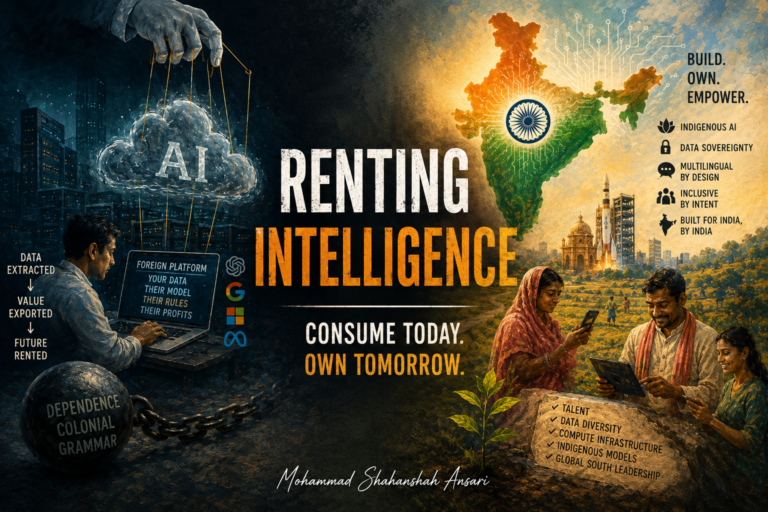

Evidence leans toward AI as a core element of the Fourth Industrial Revolution (4IR), also called Industry 4.0, which fuses digital, physical, and biological systems through technologies like AI, robotics, and IoT. A widely cited PwC projection estimates that AI could add up to $15.7 trillion to the global economy by 2030, but debates exist on whether the change is truly revolutionary or evolutionary, with risks like inequality necessitating ethical governance.

Learning AI is essential for all, as it automates routine work, enhances problem-solving, and fosters innovation across fields. It empowers individuals to collaborate with technology, address biases, and remain competitive in a changing job market, much like literacy in the information age.